Changes of America in the 1920s Essay - 723 Words.

After a brief post-war economic recession in 1920-21, the USA’s economy boomed, and so began the age of consumerism. The USA prospered and good times lay ahead. America had become without doubt the richest country in the world, and throughout the 1920s there was an almost unchecked economic boom. Major cities such as New.

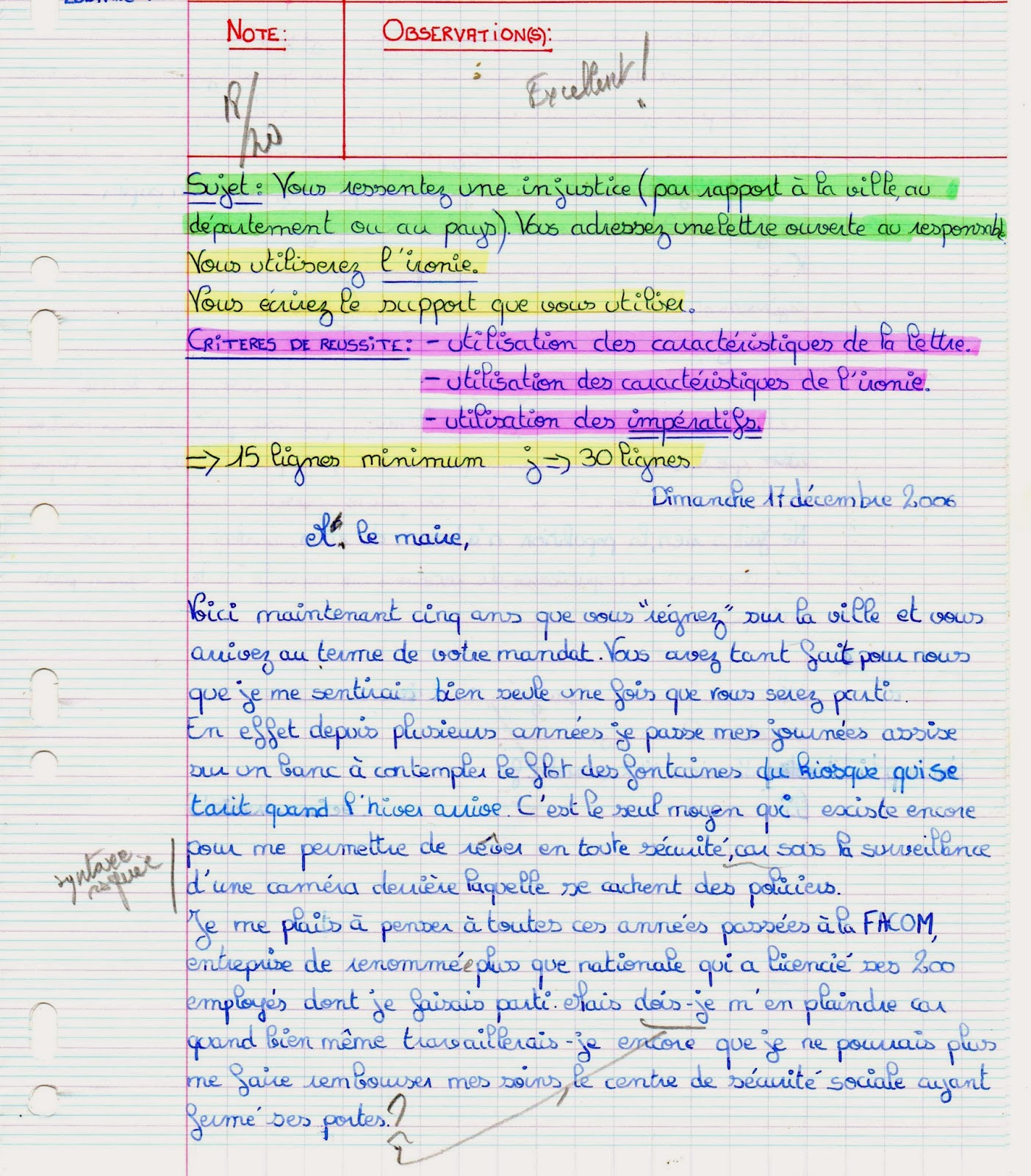

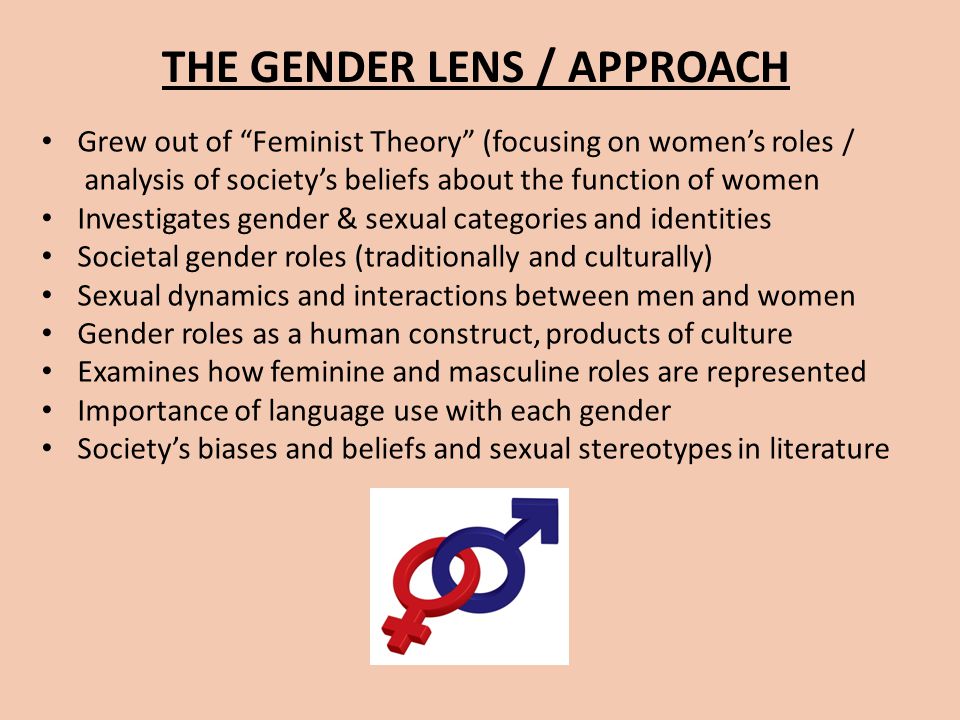

However you look at it, the 1920s was a decade that made life better for women in terms of political and personal freedom. Throughout American history, women had been excluded from politics and decision-making in society, but that slowly began to change as the United States approached the Roaring Twenties.

Automobiles as a Symbol of Prosperity in 1920s America. The automobile was one of the biggest and most important features of the 1920s. Automobiles were not only a symbol of social status but had also become so popular that nearly every family owned a car.

Comparison Between the 1920s and 1930s. The 1920s was the first decade to have a nickname, such as the “Roaring Twenties” or the “Jazz Age.” For many Americans, the 1920s was a decade of prosperity and confidence. But for others this decade seemed to bring cultural conflicts: nativists against immigrants, religious liberals against fundamentalists, and rural provincials against urban cosmopolitans.

Prohibition in the United States was a nationwide constitutional ban on the production, importation, transportation, and sale of alcoholic beverages from 1920 to 1933. Prohibitionists first attempted to end the trade in alcoholic beverages during the 19th century. Led by pietistic Protestants, they aimed to heal what they saw as an ill society beset by alcohol-related problems such as.

The Great Depression began with the Wall Street Crash in October 1929. The stock market crash marked the beginning of a decade of high unemployment, poverty, low profits, deflation, plunging farm incomes, and lost opportunities for economic growth as well as for personal advancement. Altogether, there was a general loss of confidence in the economic future.

Racism in the United States has existed since the colonial era, when white Americans were given legally or socially sanctioned privileges and rights while these same rights were denied to other races and minorities. European Americans—particularly affluent white Anglo-Saxon Protestants—enjoyed exclusive privileges in matters of education, immigration, voting rights, citizenship, land.